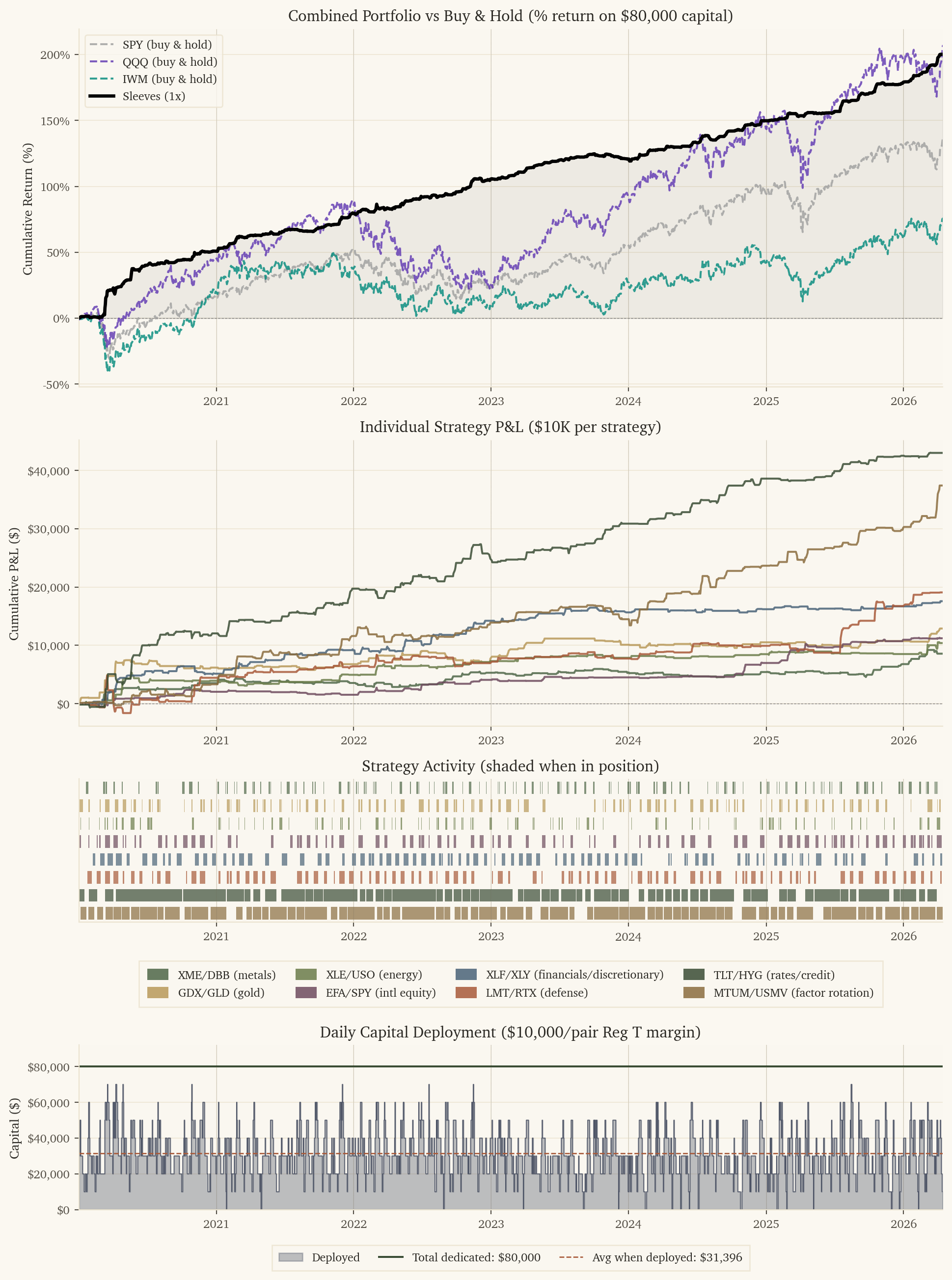

# Capital per pair trade: $10K long + $10K short = $20K gross, which needs $10K margin at Reg T 50%

MARGIN_PER_PAIR = 10000
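The $10K figure follows from applying the 50% Reg T initial margin to the $20K gross exposure of one pair. Below is a minimal sketch of that arithmetic. It assumes the same flat 50% rate on both legs and ignores maintenance margin and broker-specific rules. `reg_t_initial_margin` is a hypothetical helper, not part of the notebook.

```python
def reg_t_initial_margin(long_notional, short_notional, rate=0.50):
    """Initial margin for a long/short pair under a flat rate (assumed 50%)."""
    # Gross exposure counts both legs, regardless of sign.
    gross_exposure = abs(long_notional) + abs(short_notional)
    return rate * gross_exposure


margin = reg_t_initial_margin(10_000, 10_000)
print(f"${margin:,.0f}")  # $10,000
```

With both legs at $10K, the result matches `MARGIN_PER_PAIR`. Changing either leg or the rate shows how the per-pair capital would scale.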

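import matplotlib.pyplot as plt
import numpy as np
import pandas as pd
from matplotlib.gridspec import GridSpec
from matplotlib.patches import Patch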

fig = plt.figure(figsize=(10, 13.5), constrained_layout=True)

gs = GridSpec(5, 1, height_ratios=[2.5, 2, 1, 0.4, 1.15], figure=fig)

ax1 = fig.add_subplot(gs[0])

ax_strat = fig.add_subplot(gs[1], sharex=ax1)

ax2 = fig.add_subplot(gs[2], sharex=ax1)

ax_legend = fig.add_subplot(gs[3])

ax_legend.axis('off') # spacer row reserved for the strategy legend

ax3 = fig.add_subplot(gs[4], sharex=ax1)

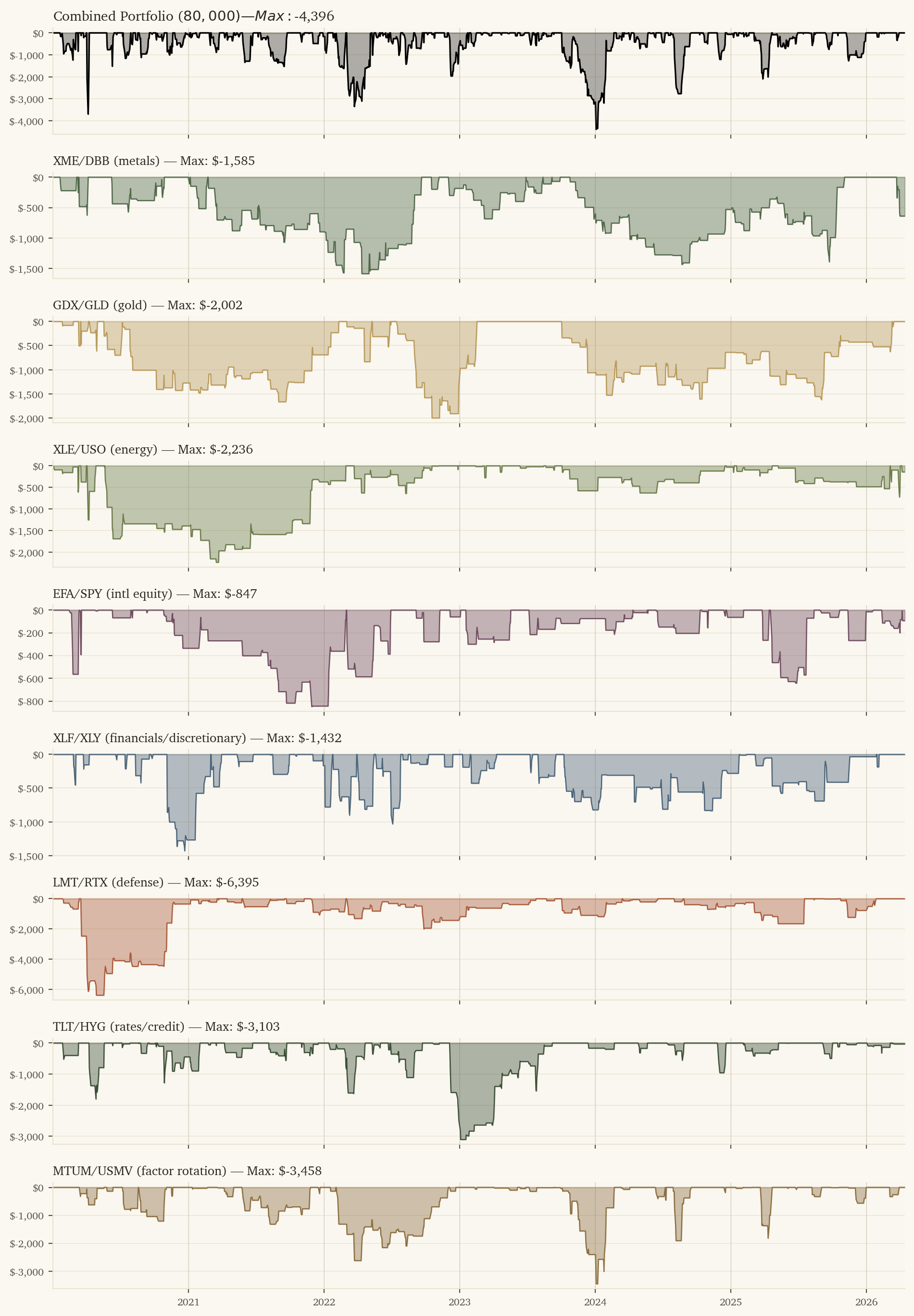

# Panel 1: Combined portfolio (sleeve allocation, 1x) vs. benchmarks, in percent of total capital

for ticker, (bdf, label, color) in benchmarks.items():
    ax1.plot(bdf['dt'], bdf[f'{ticker}_cum'] / total_capital * 100,
             color=color, linewidth=1.5, linestyle='--', alpha=0.8, label=label, zorder=3)

ax1.plot(port['dt'], port['port_cum'] / total_capital * 100,
         color='black', linewidth=2.5, label='Sleeves (1x)', zorder=5)

ax1.fill_between(port['dt'], 0, port['port_cum'] / total_capital * 100, alpha=0.05, color='black')

ax1.axhline(y=0, color='gray', linewidth=0.5, linestyle='--')

# Faint vertical line at each year boundary, drawn on every time-series panel
for yr in range(port['dt'].dt.year.min(), port['dt'].dt.year.max() + 2):
    for ax in [ax1, ax_strat, ax2, ax3]:
        ax.axvline(x=pd.Timestamp(f'{yr}-01-01'), color='gray', linewidth=0.3, linestyle=':')

ax1.set_ylabel('Cumulative Return (%)')

ax1.set_title(f'Combined Portfolio vs Buy & Hold (% return on ${total_capital:,} capital)')

ax1.yaxis.set_major_formatter(plt.FuncFormatter(lambda x, _: f'{x:.0f}%'))

ax1.legend(loc='upper left', fontsize=9)

# Panel 2: Individual strategies (own scale) — colors shared with activity below

for s in strat_names:
    ax_strat.plot(port['dt'], port[f'{s}_cum'], color=strat_colors[s], linewidth=1.5,
                  alpha=0.85, label=strat_labels[s])

ax_strat.axhline(y=0, color='gray', linewidth=0.5, linestyle='--')

ax_strat.set_ylabel('Cumulative P&L ($)')

ax_strat.set_title('Individual Strategy P&L ($10K per strategy)')

ax_strat.yaxis.set_major_formatter(plt.FuncFormatter(lambda x, _: f'${x:,.0f}'))

# Panel 3: Strategy activity, one horizontal lane per strategy, shaded when active

# Active = pred != 0 OR holding open position

hold_periods = {'xme_dbb': 1, 'gdx_gld': 2, 'xle_uso': 1, 'efa_spy': 3, 'xlf_xly': 3,

'lmt_rtx': 3, 'tlt_hyg': 10, 'mtum_usmv': 10}

# For each strategy, compute "in position" days (active OR holding from prior entry)

for s in strat_names:
    hold = hold_periods[s]
    active = port[f'{s}_active'].astype(bool).values
    # Forward-fill: a position entered on day i is held for `hold` days
    in_position = np.zeros(len(active), dtype=bool)
    for i in np.flatnonzero(active):
        in_position[i:i + hold] = True  # the slice is clipped at the end of the array
    port[f'{s}_in_position'] = in_position

# Plot one horizontal lane per strategy

lane_height = 0.7

for idx, s in enumerate(strat_names):
    y_pos = len(strat_names) - 1 - idx  # top-to-bottom order
    in_pos = port[f'{s}_in_position'].values
    # Find contiguous runs of activity for cleaner rendering
    in_run = False
    run_start = None
    for i, active in enumerate(in_pos):
        if active and not in_run:
            run_start = port['dt'].iloc[i]
            in_run = True
        elif not active and in_run:
            run_end = port['dt'].iloc[i]
            ax2.barh(y_pos, run_end - run_start, left=run_start,
                     height=lane_height, color=strat_colors[s], alpha=0.7)
            in_run = False
    # Close a run that is still open on the last date
    if in_run:
        run_end = port['dt'].iloc[-1] + pd.Timedelta(days=1)
        ax2.barh(y_pos, run_end - run_start, left=run_start,
                 height=lane_height, color=strat_colors[s], alpha=0.7)

ax2.set_yticks([])

ax2.set_title('Strategy Activity (shaded when in position)')

ax2.set_ylim(-0.5, len(strat_names) - 0.5)

ax2.grid(axis='y', visible=False)

ax2.set_ylabel('')

# Shared legend on its dedicated spacer axis — color swatches for each strategy

legend_handles = [Patch(facecolor=strat_colors[s], alpha=0.85, label=strat_labels[s])
                  for s in strat_names]
ax_legend.legend(handles=legend_handles, loc='center', ncol=4, frameon=True,
                 fancybox=False, fontsize=9, columnspacing=1.6, handlelength=1.8,
                 handleheight=1.1, borderpad=0.7)
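The activity panel rests on two steps: extending each entry signal over its holding window, then collapsing the resulting mask into contiguous runs so each run is drawn as one bar. Below is a minimal standard-library sketch of both steps on plain lists. `holding_mask` and `contiguous_runs` are hypothetical helper names, and the signal list is made-up illustration data, not strategy output.

```python
from itertools import groupby


def holding_mask(signals, hold):
    """Mark each signal day and the following hold - 1 days as in position."""
    mask = []
    remaining = 0  # days left in the current holding window
    for signal in signals:
        if signal:
            remaining = hold  # a new entry restarts the window
        mask.append(remaining > 0)
        remaining = max(remaining - 1, 0)
    return mask


def contiguous_runs(mask):
    """Return (start, stop) index pairs for each run of True; stop is exclusive."""
    runs = []
    position = 0
    for value, group in groupby(mask):
        length = sum(1 for _ in group)
        if value:
            runs.append((position, position + length))
        position += length
    return runs


signals = [False, True, False, False, False, True, True, False, False, False]
mask = holding_mask(signals, hold=3)
print(contiguous_runs(mask))  # [(1, 4), (5, 9)]
```

Overlapping entries (days 5 and 6 here) merge into one run, so they produce one bar. The exclusive `stop` index corresponds to the first inactive date used as `run_end` in the plotting loop.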

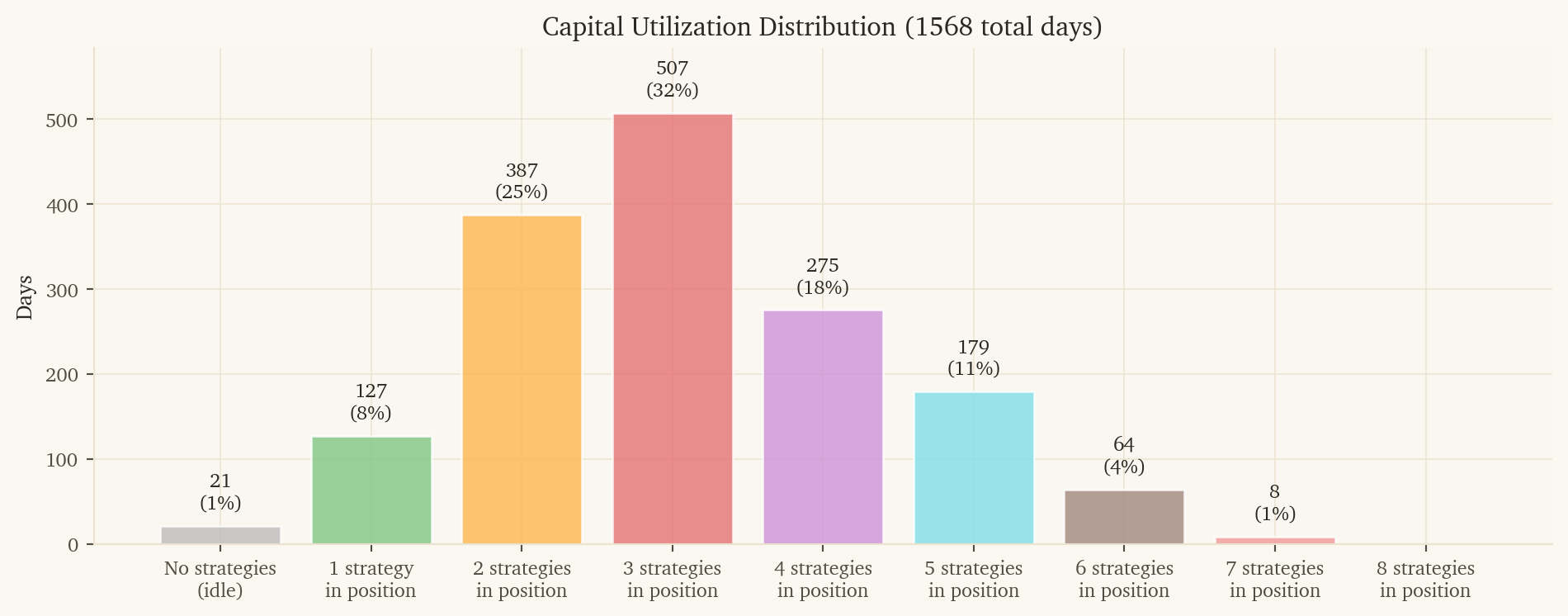

# Panel 4: Capital deployed per day

# Each strategy has $10K dedicated capital sitting in account at all times.

# When active (in position), capital is deployed; otherwise it's idle cash.

port['n_in_position'] = sum(port[f'{s}_in_position'].astype(int) for s in strat_names)

port['capital_deployed'] = port['n_in_position'] * MARGIN_PER_PAIR

ax3.fill_between(port['dt'], port['capital_deployed'], 0, color=palette('slate'), alpha=0.35, step='mid', label='Deployed')

ax3.plot(port['dt'], port['capital_deployed'], color=palette('slate'), linewidth=0.5, drawstyle='steps-mid')

# Show total dedicated capital line

ax3.axhline(y=total_capital, color=palette('sage-dark'), linewidth=1.5, linestyle='-',
            label=f'Total dedicated: ${total_capital:,}')

# Average over days with at least one open position (idle days are excluded)
avg_deployed = port.loc[port['capital_deployed'] > 0, 'capital_deployed'].mean()

ax3.axhline(y=avg_deployed, color=palette('rust'), linewidth=1, linestyle='--',
            label=f'Avg when deployed: ${avg_deployed:,.0f}')

ax3.set_ylabel('Capital ($)')

ax3.set_title(f'Daily Capital Deployment (${MARGIN_PER_PAIR:,}/pair Reg T margin)')

ax3.yaxis.set_major_formatter(plt.FuncFormatter(lambda x, _: f'${x:,.0f}'))

ax3.set_ylim(0, total_capital * 1.15)

ax3.set_xlim(port['dt'].min(), port['dt'].max())

ax3.legend(loc='upper center', bbox_to_anchor=(0.5, -0.18), ncol=3,
           frameon=True, fancybox=False, fontsize=9, columnspacing=1.6,
           handlelength=2.0, borderpad=0.6)

# Constrained layout (above) auto-fits titles/legends/labels — no manual margins needed.

# Add a touch of breathing room around the entire figure.

fig.get_layout_engine().set(w_pad=0.05, h_pad=0.06, hspace=0.05, wspace=0.05)

plt.savefig('portfolio_overview.png', dpi=110, bbox_inches='tight', facecolor='white')

plt.show()
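The dashed "Avg when deployed" line averages only over days with at least one open position, so it can overstate typical usage when the book is often flat. The sketch below contrasts that conditional mean with the mean over all days and expresses the latter as a share of dedicated capital. The daily position counts are made-up illustration data, and the eight sleeves at $10K each are an assumption based on the eight entries in `hold_periods`.

```python
from statistics import mean

MARGIN_PER_PAIR = 10_000
N_STRATEGIES = 8  # assumed: one $10K sleeve per entry in hold_periods
total_capital = N_STRATEGIES * MARGIN_PER_PAIR

# Hypothetical number of strategies in position on each of ten days
n_in_position = [0, 0, 2, 3, 0, 1, 4, 0, 0, 2]
deployed = [n * MARGIN_PER_PAIR for n in n_in_position]

avg_when_deployed = mean(d for d in deployed if d > 0)  # conditional on being active
avg_all_days = mean(deployed)                           # idle days count as zero
utilization = avg_all_days / total_capital

print(f"${avg_when_deployed:,.0f} vs ${avg_all_days:,.0f}, utilization {utilization:.1%}")
# $24,000 vs $12,000, utilization 15.0%
```

Reporting both numbers separates how much capital a typical active day uses from how much of the dedicated capital is working on an average day.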